
In addition, Kafka ships with Kafka Streams, which can be used for simple stream processing without the need for a separate cluster as for Apache Spark or Apache Flink.īecause messages are persisted on disk as well as replicated within the cluster, data loss scenarios are less common than with Flume. Data spikes and back-pressure (fast producer, slow consumer) are handled out-of-the-box. Kafka can be used for event processing and integration between components of large software systems. Kafka now comes in two flavors: the “classic” producer/consumer model, and the new Kafka-Connect, which provides configurable connectors (sources/sinks) to external data stores. Compared to Flume, Kafka offers better scalability and message durability. Messages are organized into topics, topics are split into partitions, and partitions are replicated across the nodes - called brokers - in the cluster. Kafka is a distributed, high-throughput message bus that decouples data producers from consumers. For high availability (HA), agents can be scaled horizontally. Flume does offer scalability through multi-hop/fan-in fan-out flows. Even then, since data is not replicated to other nodes, the file channel is only as reliable as the underlying disks. A file channel will provide durability at the price of increased latency. For instance, choosing the memory channel for high throughput has the downside that data will be lost when the agent node goes down. It is easy to lose data using Flume if you’re not careful. Flume is configuration-based and has interceptors to perform simple transformations on in-flight data.

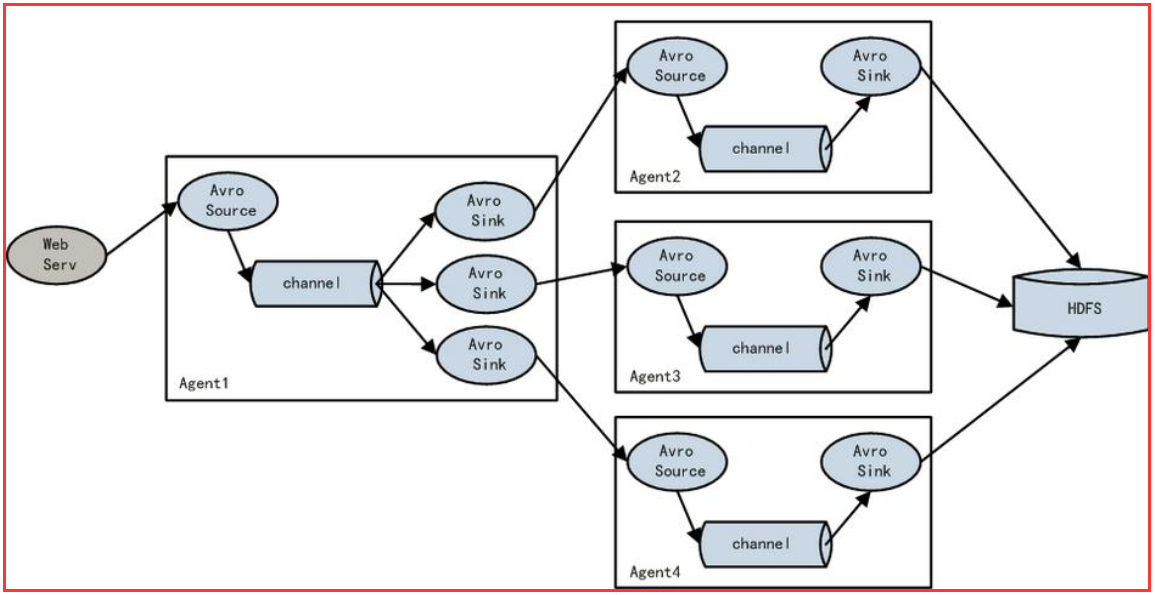

It comes with many built-in sources, channels, and sinks, for example, Kafka Channel and Avro sink. Agents can be chained and have each multiple sources, channels, and sinks.įlume is a distributed system that can be used to collect, aggregate, and transfer streaming events into Hadoop. A sink can also be a follow-on source of data for other Flume agents. Flume clients send events to the source, which places those events in batches into a temporary buffer called channel, and from there the data flows to a sink connecting to data’s final destination.

The Flume Agent is a JVM process that hosts the basic building blocks of a Flume topology, which are the source, the channel, and the sink. Apache FlumeĪ Flume deployment consists of one or more agents configured with a topology. All three products offer great performance, can be scaled horizontally, and provide a plug-in architecture where functionality can be extended through custom components.

In this article, we’ll focus briefly on three Apache ingestion tools: Flume, Kafka, and NiFi. will all come into play when deciding on which tools to adopt to meet our requirements. Preliminary considerations such as scalability, reliability, adaptability, cost in terms of development time, etc. When building big data pipelines, we need to think on how to ingest the volume, variety, and velocity of data showing up at the gates of what would typically be a Hadoop ecosystem.
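To make the memory-channel versus file-channel trade-off described for Flume more concrete, here is a toy simulation in Python. It is only a sketch and does not use Flume's own classes or configuration: `MemoryChannel`, `FileChannel`, and `simulate_crash` are hypothetical names made up for this illustration, and the "crash" is simply the channel object being discarded before a sink drains it.

```python
import json
import os
import tempfile


class MemoryChannel:
    """Hypothetical stand-in for a memory channel: fast, but lives only in the process."""

    def __init__(self):
        self.events = []

    def put(self, event):
        self.events.append(event)

    def take_all(self):
        taken, self.events = self.events, []
        return taken


class FileChannel:
    """Hypothetical stand-in for a file channel: every event is written to local disk."""

    def __init__(self, path):
        self.path = path

    def put(self, event):
        with open(self.path, "a", encoding="utf-8") as f:
            f.write(json.dumps(event) + "\n")
            f.flush()
            os.fsync(f.fileno())  # forcing the write to disk is where the extra latency comes from

    def take_all(self):
        if not os.path.exists(self.path):
            return []
        with open(self.path, encoding="utf-8") as f:
            taken = [json.loads(line) for line in f]
        os.remove(self.path)
        return taken


def simulate_crash(make_channel, events):
    """Buffer events, lose the agent before the sink runs, restart, and drain what is left."""
    channel = make_channel()
    for event in events:
        channel.put(event)
    del channel  # the agent process dies here; nothing was delivered to the sink yet
    restarted = make_channel()
    return restarted.take_all()


events = [{"id": i, "body": f"log line {i}"} for i in range(3)]
path = os.path.join(tempfile.mkdtemp(), "channel.log")

lost = simulate_crash(MemoryChannel, events)
recovered = simulate_crash(lambda: FileChannel(path), events)
print(len(lost), len(recovered))  # prints "0 3"
```

The file-backed variant survives the restart only because the file is still there. If the disk itself were lost, the events would be gone too, which is why the file channel is only as reliable as the underlying disks.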
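The topic, partition, and broker layout described for Kafka can also be sketched in a few lines of Python. This is an illustration under stated assumptions, not Kafka's actual partitioner or replica-assignment logic: it uses `zlib.crc32` as a stand-in hash and a simple round-robin placement of replicas, and the names `partition_for`, `replicas_for`, `BROKERS`, and the sample keys are invented for the example.

```python
import zlib
from collections import defaultdict

NUM_PARTITIONS = 3          # assumed partition count for one topic
REPLICATION_FACTOR = 2      # assumed number of copies of each partition
BROKERS = ["broker-0", "broker-1", "broker-2"]  # hypothetical broker names


def partition_for(key, num_partitions=NUM_PARTITIONS):
    """Map a message key to a partition with a stable hash (stand-in for Kafka's own hashing)."""
    return zlib.crc32(key.encode("utf-8")) % num_partitions


def replicas_for(partition, brokers=BROKERS, rf=REPLICATION_FACTOR):
    """Place rf copies of a partition on consecutive brokers, wrapping around the list."""
    return [brokers[(partition + i) % len(brokers)] for i in range(rf)]


# Each partition is an append-only log; messages with the same key stay in order.
topic = defaultdict(list)
messages = [
    ("user-1", "login"),
    ("user-2", "login"),
    ("user-1", "click"),
    ("user-1", "logout"),
]
for key, value in messages:
    topic[partition_for(key)].append((key, value))

for partition in sorted(topic):
    print(partition, replicas_for(partition), topic[partition])
```

Because the same key always hashes to the same partition, all events for `user-1` land in one log in the order they were produced, while each partition's copies sit on different brokers so that losing one broker does not lose the data.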
